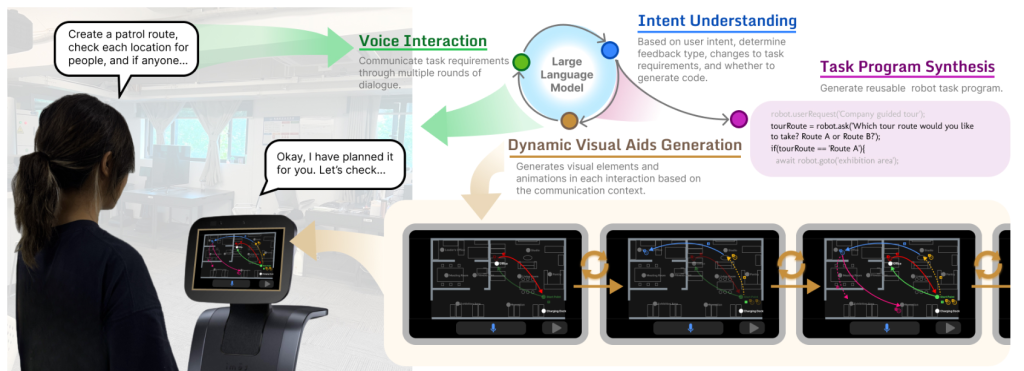

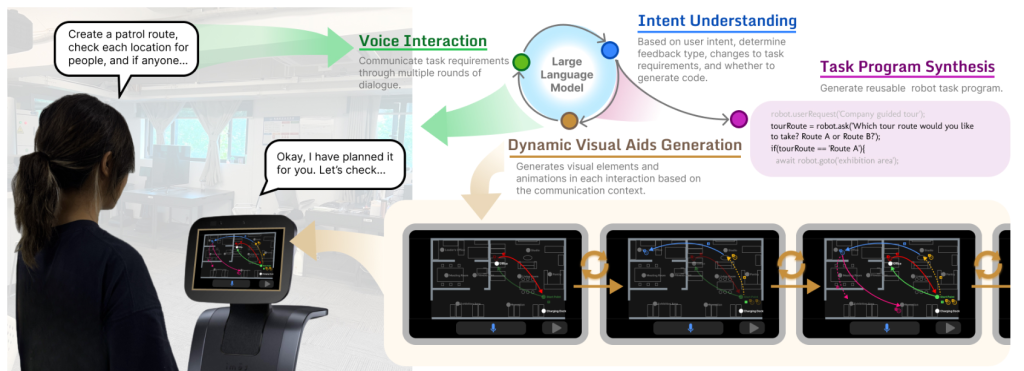

This work investigates the integration of generative visual aids in human-robot task communication. We developed GenComUI, a system powered by large language models (LLMs) that dynamically generates contextual visual aids (such as map annotations, path indicators, and animations) to support verbal task communication and facilitate the generation of customized task programs for the robot. The system was informed by a formative study that examined how humans use external visual tools to assist verbal communication in spatial tasks. To evaluate its effectiveness, we conducted a user experiment (n = 20) comparing GenComUI with a voice-only baseline. Both qualitative and quantitative results show that generative visual aids enhance verbal task communication by providing continuous visual feedback, promoting natural and effective human-robot communication. The study also offers a set of design implications, emphasizing how dynamically generated visual aids can serve as an effective communication medium in human-robot interaction. These findings underscore the potential of generative visual aids to inform the design of more intuitive and effective human-robot communication, particularly for complex communication scenarios in human-robot interaction and LLM-based end-user development.

End-user development allows everyday users to tailor service robots or applications to their needs. One user-friendly approach is natural language programming. However, it encounters challenges such as an expansive user expression space and limited support for debugging and editing, which restrict its application in end-user programming. The emergence of large language models (LLMs) offers promising avenues for the translation and interpretation between human language instructions and the code executed by robots, but their application in end-user programming systems requires further study. We introduce Cocobo, a natural language programming system with interactive diagrams powered by LLMs. Cocobo employs LLMs to understand users’ authoring intentions, generate and explain robot programs, and facilitate the conversion between executable code and flowchart representations. Our user study shows that Cocobo has a low learning curve, enabling even users with zero coding experience to customize robot programs successfully.

Autonomous agents, including service robots, must adhere to moral values, legal regulations, and social norms to interact effectively with humans. A vital aspect of this is learning the ownership relationships between humans and the items they carry, which brings practical benefits and a deeper understanding of human social norms. The proposed framework enables robots to learn item ownership relationships autonomously or through user interaction. The autonomous learning component is based on Human-Object Interaction (HOI) detection, through which the robot acquires knowledge of item ownership by recognizing correlations between human-object interactions. The interactive learning component allows for natural interaction between users and the robot, enabling users to demonstrate item ownership by presenting items to the robot. The learning process is divided into four stages to address the challenges posed by changing item ownership in real-world scenarios. While many aspects of ownership relationship learning remain unexplored, this research aims to explore and design general approaches to item ownership learning in service robots with respect to their applicability and robustness. In future work, we will evaluate the performance of the proposed framework through a case study.

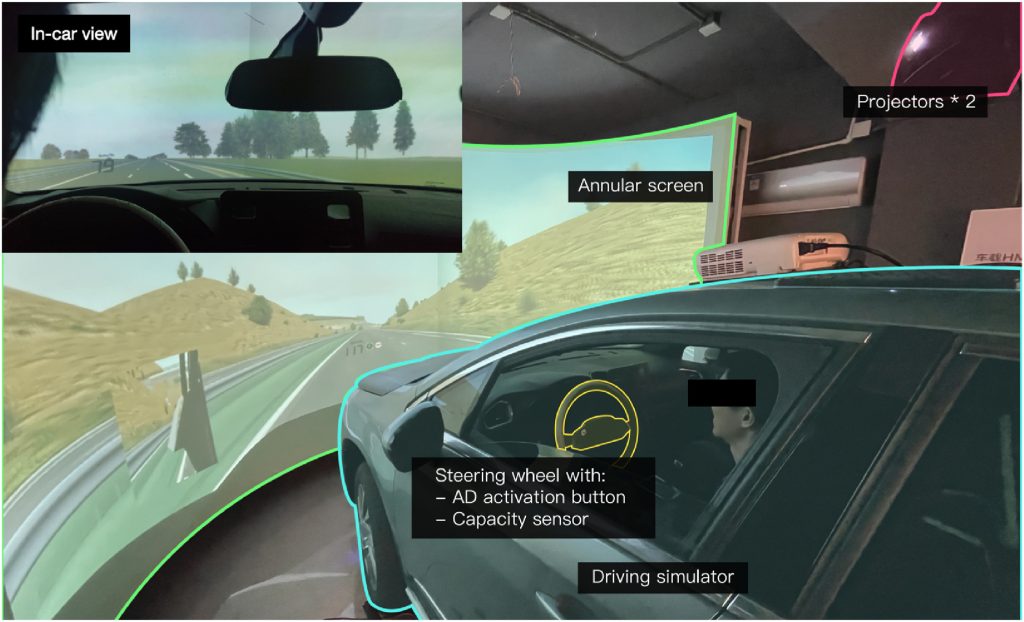

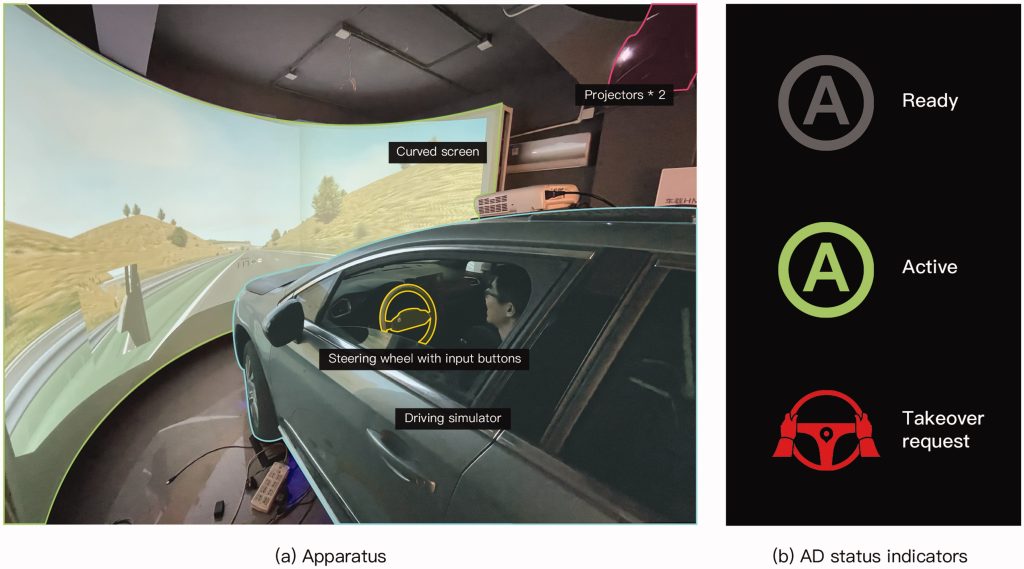

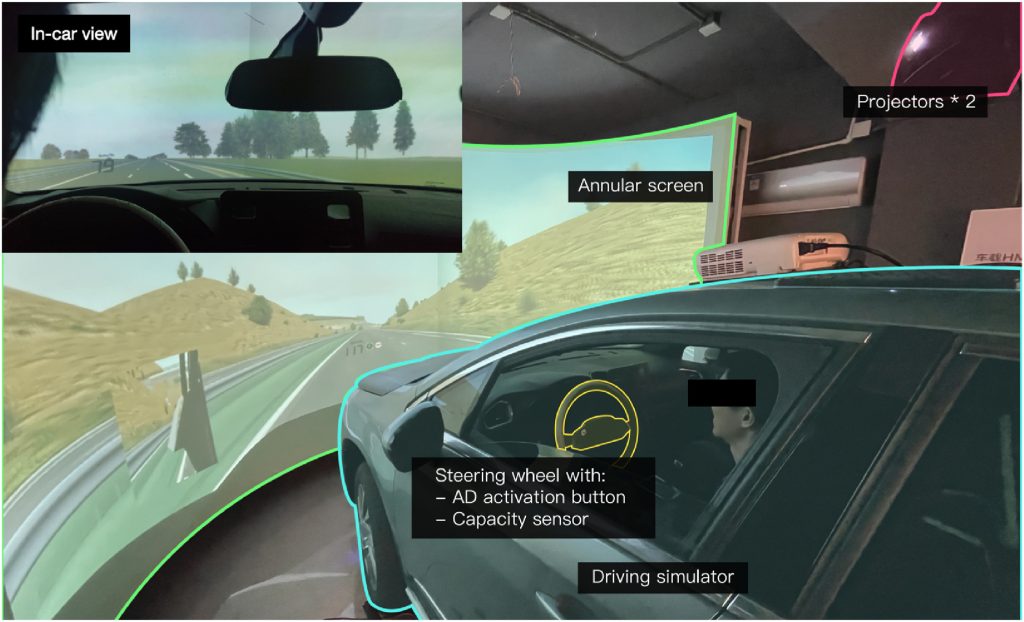

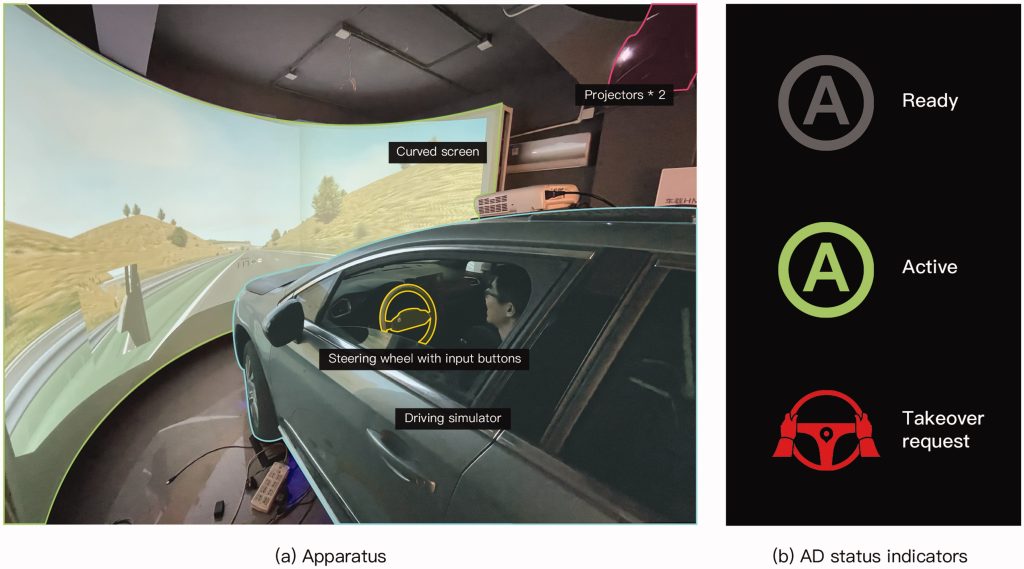

This study investigated how drivers manage the take-over when a silent or alerted failure of automated lateral control occurs after monotonous hands-off driving with partial automation. Twenty-two drivers with varying levels of prior ADAS experience participated in the driving simulator experiment. The failures were injected into the driving scenario on curved road segments, accompanied by either a visual-auditory alert or no change in the HMI. Results indicated that drivers could rarely maintain lane-keeping when automated steering was disabled silently, but most drivers safely managed the alerted failure situation within the ego-lane. The silent failure yielded significantly longer take-over times and generally worse lateral control quality. In contrast, poor longitudinal control performance was observed in the alerted condition due to more brake usage. An expert-based controllability assessment method was introduced in this study. The silent lateral failure situation during monotonous hands-off driving was rated as uncontrollable, while the alerted situation was rated as basically controllable. Participants expressed a preference for take-over requests (TORs), and the importance of conveying the reasons for a TOR was also demonstrated.

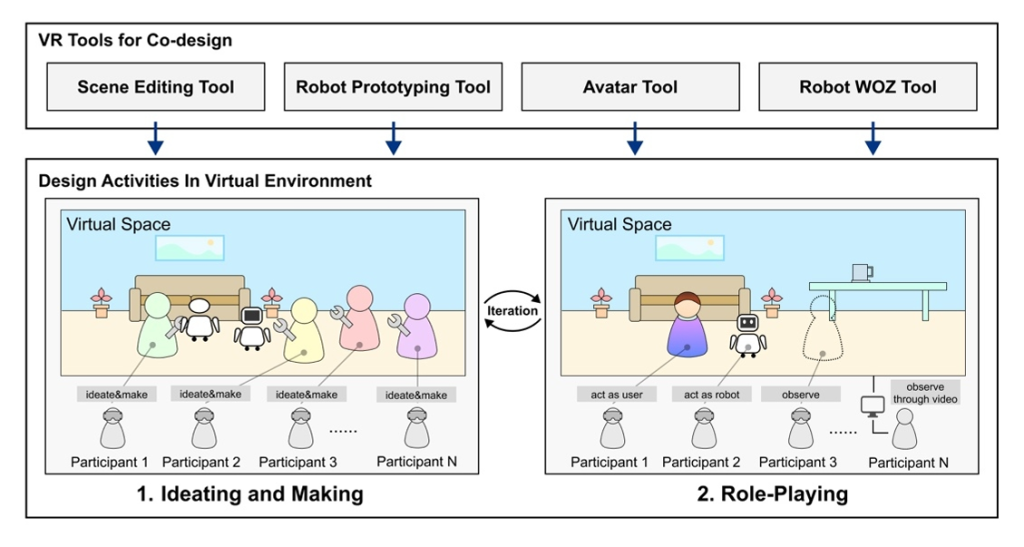

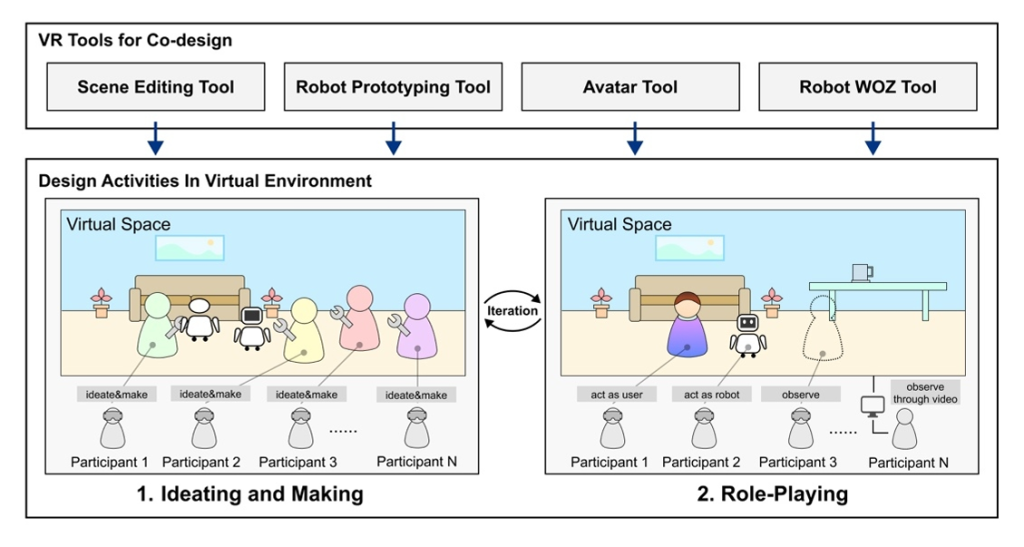

Service robots are being applied in an increasing number of settings, including homes, hospitals, offices, schools, and hotels. To ensure a usable interaction interface and process, the design of human-robot interaction (HRI) for service robot applications must account for various interaction modalities, different robot forms, and different physical behaviors of robots in space. This makes it challenging to find low-cost, high-fidelity prototyping methods that support design exploration in the early stages of service robot application design.

Many rapid prototyping techniques are currently used in the design exploration stage of service robot applications, such as paper prototypes, storyboards, and video prototypes. However, these methods have limitations, including low fidelity and detachment from the environmental context. Researchers have also been exploring new prototyping methods to meet the design exploration and testing requirements of HRI design. Some studies have explored the use of VR to test HRI prototypes, but most of them focus on technical aspects and the performance of HRI capabilities. Other studies focus on specific HRI design aspects, such as interaction mechanisms, anthropomorphic appearance, and social acceptance. Approaches focusing on these aspects are not suitable for prototyping and testing the overall interaction scenarios of service robot applications.

Based on the characteristics of virtual reality technology, this paper explores how virtual reality can support multi-user collaborative design of service robot applications in a virtual environment. To address this question, the paper proposes a system for supporting collaborative design of service robot applications and describes its framework and implementation in detail. Specifically, the system enables multiple users (designers or stakeholders) to enter a virtual environment in real time using head-mounted VR devices. Users can select appropriate environment model assets based on the target application scenario and perform bodystorming "on site". Using a modular robot building tool, users can add virtual robots to the space, add or delete functional components, and adjust the position, size, and orientation of the components. The system's Wizard-of-Oz (WoZ) module allows users to control the robot's movement and component status. The graphical UI is embedded into the robot's physical display via WebView, which supports simulation of the graphical UI interaction process. After completing the initial application concept ideation and virtual robot design, users can use WoZ and role-playing techniques to act out the human-robot interaction process in order to evaluate and optimize the interaction design. In addition, the recorded video of the performance can support subsequent design discussions.

The system is implemented using the Unity game engine, and users interact with it using Oculus Quest headsets and controllers. A design activity case based on the system will be evaluated and discussed to analyze the strengths and weaknesses of the system. Further, we discuss the limitations of this work and future research directions for supporting the design of service robot applications using virtual reality.

Explanations have become increasingly vital in communicating to human drivers the reasons behind the decisions of autonomous vehicles (AVs), particularly in tactical-level driving tasks. Focusing on lane-changing scenarios, we examine whether providing tactical-level explanations, and additionally a confirmation option, influences drivers’ decision-making, trust, and emotional experience. Thirty participants were assigned equally to three groups: indicator (I), explanation (E), and explanation + confirmation (EC), each experiencing four lane-changing scenarios in a driving simulator. Real-time question probes and interviews were used to understand drivers’ decision-making process, and post-drive questionnaires on trust and emotional experience were administered. Results indicated that merely providing tactical-level explanations had little effect on drivers’ trust and experience, but led to worse decision-making performance. The option to confirm lane changes after an explanation promoted drivers’ trust, but had mixed effects on decision-making performance. Situational trust and decision-making performance varied significantly across lane-changing scenarios.

Benefiting from progress in the field of explainable artificial intelligence (XAI), explanations have shown increasing promise in the autonomous vehicle (AV) context. Providing explanations has been shown to be vital for human-AV interaction, but what to explain and how to explain it remain open questions. This study seeks to bridge the areas of XAI and human-AV interaction by combining the perspectives of both users and researchers. In this paper, a conceptual framework of explanation models is proposed to indicate which aspects to explain in human-AV interaction. Based on the framework, we introduce a scenario-based and question-driven method, the SQX-canvas, to guide the workflow of generating explanations from users’ demands in a given AV scenario. As an initial validation of the method, a co-design workshop involving researchers and users was conducted with four AV scenarios provided in the form of video clips. Participants produced explanation concepts and expressed their attitudes towards the AV scenarios following the “scenario, question and explanation” process. Users’ demands for explanations varied across scenarios, and the findings as well as limitations are discussed. This method could provide implications for research and practice on facilitating transparent human-AV interaction.

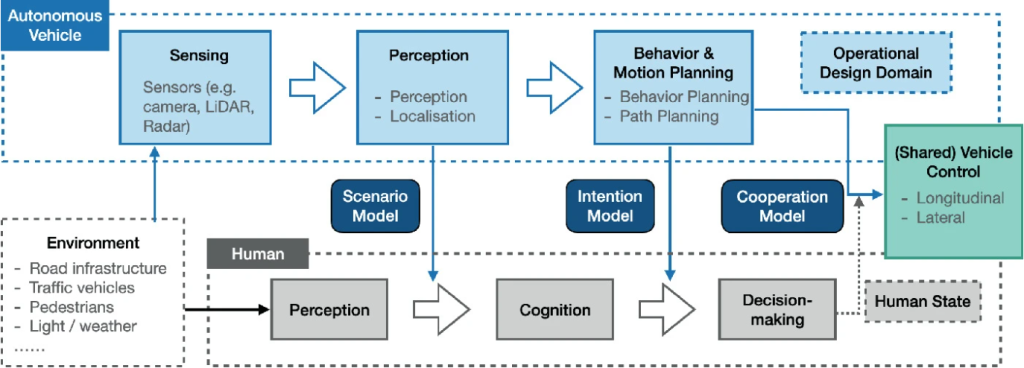

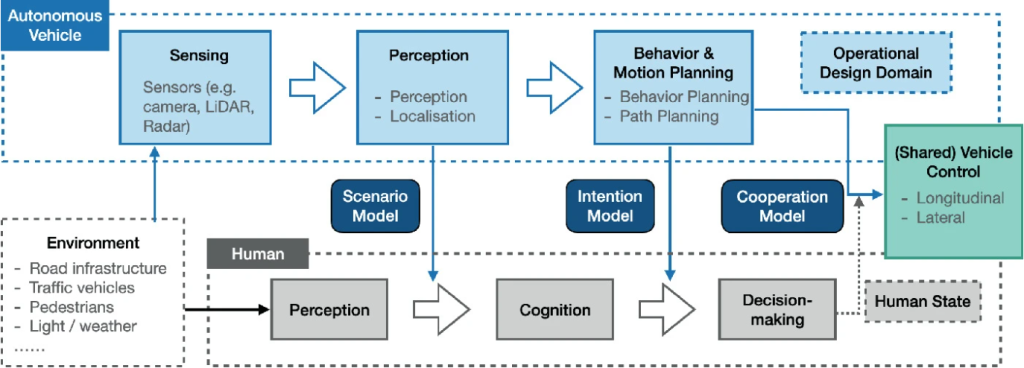

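With the widespread application of modern artificial intelligence techniques in autonomous driving systems, the problem of explainability has become increasingly prominent. This paper therefore examines the framework and design elements of explainable interaction in human-autonomous vehicle interaction, with the aim of enhancing the decision transparency, safety, and user trust of autonomous driving systems. Drawing on the basic theories and methods of explainable artificial intelligence and human-computer interaction, the paper first introduces explainable artificial intelligence and summarizes current methods for extracting explainable content. It then builds a framework for explainable interaction in human-autonomous vehicle interaction on the basis of a transparency model of human-robot interaction. Finally, it discusses the interaction design of explainability along several design dimensions, including the target, form, and evaluation of explanations, and analyzes them with case studies. As the interface between people and model decisions, explainability is not merely an AI technology problem; it is closely tied to people and involves multiple levels of human-autonomous vehicle interaction. The paper proposes a framework for explainable interaction in human-autonomous vehicle interaction and derives the explanation content needed at each stage of the interaction, as well as the elements of explainable interaction design.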

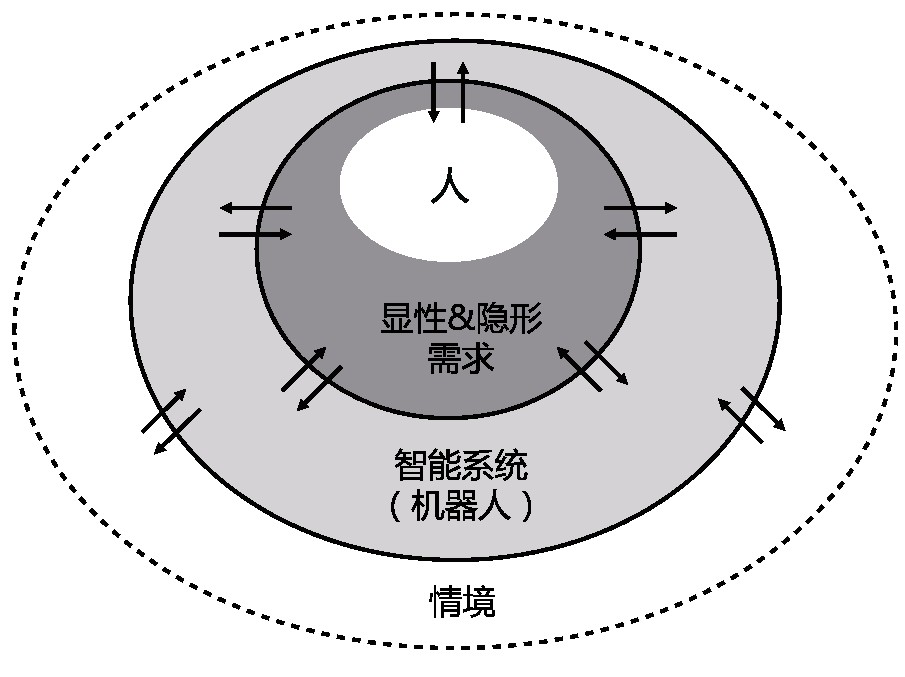

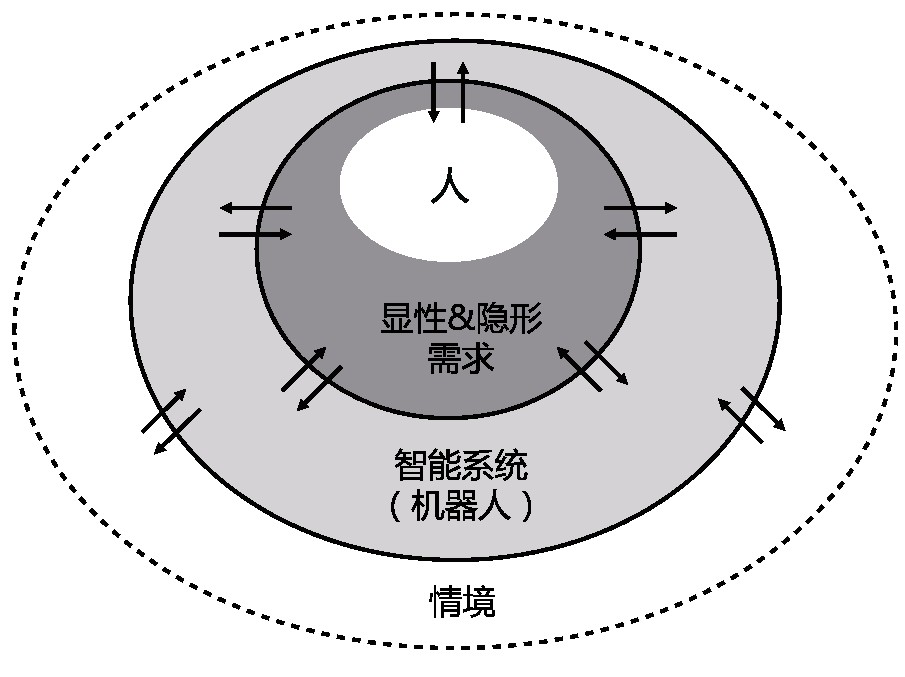

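Intelligent systems are increasingly present in scenarios such as intelligent manufacturing, smart cities, healthcare, and everyday services. To address the many challenges that arise in interaction between humans and intelligent systems, this paper analyzes the key issues in human-machine intelligent collaboration from the perspectives of technology and experience. It reviews the development trajectory and research scope from human-computer interaction to human-machine intelligent collaboration and proposes a research framework that combines the technology and experience perspectives. Starting from the characteristics of intelligent systems, it identifies the emerging problems that human-machine intelligent collaboration raises from the technology perspective, explores from the experience perspective how to advance such collaboration, and on this basis summarizes its development trends. The paper summarizes three stages in the evolution of human-computer interaction; identifies the key technical issues, including the allocation of agency between human and machine, dynamic learning and correction, context adaptation, and proactive response modes; discusses the experience-side issues of explainability, trust, affect, and fairness and accountability; and points to a trend toward comprehensive, multi-type, and systematic development of human-machine intelligent collaboration.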

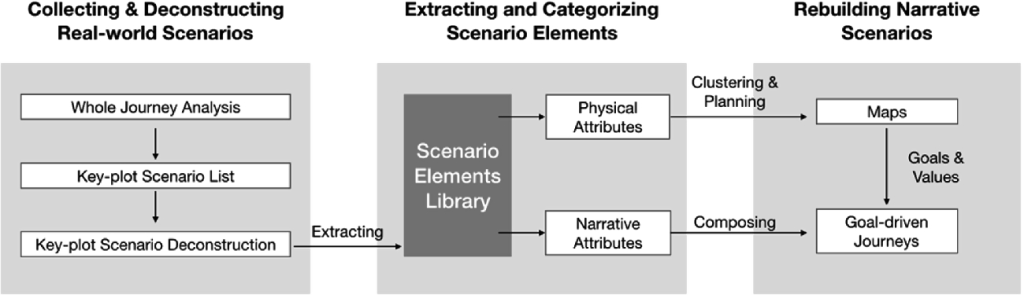

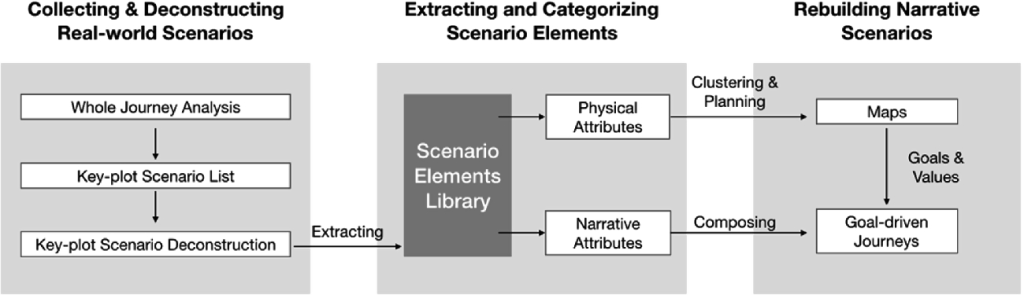

With the rapid development and application of autonomous vehicles, human-autonomous vehicle interaction (HAI) has recently gained importance. Simulation is an effective and efficient approach for research and testing in the HAI domain, and scenarios are crucial to the validity and experience of HAI simulators. However, research on systematically building scenarios is still lacking. This paper proposes the concept of the narrative scenario in HAI simulation and establishes a three-stage framework for building narrative scenarios. First, key-plot scenarios are collected from a whole-journey analysis and deconstructed into elements. Second, the elements are extracted and categorized into a library, and their physical and narrative attributes are defined. Finally, scenarios in simulators can be rebuilt in a narrative sequence driven by task goals.

With the rapid development and application of autonomous vehicles, human-autonomous vehicle interaction (HAI) has recently gained importance. Simulation is an effective and efficient approach for the research and testing in the HAI domain, and scenarios are crucial to the validity and experience of HAI simulators. However, research on systematically building scenarios is still lacking. This paper proposes the concept of the narrative scenario in the HAI simulation, and a three-stage framework for building narrative scenarios is established. First, key-plot scenarios are collected from the whole journey analysis and deconstructed into elements. After extracted and categorized into a library, the physical and narrative attributes of scenario elements are defined. Finally, scenarios in simulators can be rebuilt in a narrative sequence driven by task goals.[......]继续阅读

This work investigates the integration of generative visual aids in human-robot task communication. We developed GenComUI, a system powered by large language models (LLMs) that dynamically generates contextual visual aids—such as map annotations, path indicators, and animations—to support verbal task communication and facilitate the generation of customized task programs for the robot. This system was informed by a formative study that examined how humans use external visual tools to assist verbal communication in spatial tasks. To evaluate its effectiveness, we conducted a user experiment (n = 20) comparing GenComUI with a voice-only baseline. The results demonstrate that generative visual aids, through both qualitative and quantitative analysis, enhance verbal task communication by providing continuous visual feedback, thus promoting natural and effective human-robot communication. Additionally, the study offers a set of design implications, emphasizing how dynamically generated visual aids can serve as an effective communication medium in human-robot interaction. These findings underscore the potential of generative visual aids to inform the design of more intuitive and effective human-robot communication, particularly for complex communication scenarios in human-robot interaction and LLM-based end-user development.[......]

This work investigates the integration of generative visual aids in human-robot task communication. We developed GenComUI, a system powered by large language models (LLMs) that dynamically generates contextual visual aids—such as map annotations, path indicators, and animations—to support verbal task communication and facilitate the generation of customized task programs for the robot. This system was informed by a formative study that examined how humans use external visual tools to assist verbal communication in spatial tasks. To evaluate its effectiveness, we conducted a user experiment (n = 20) comparing GenComUI with a voice-only baseline. The results demonstrate that generative visual aids, through both qualitative and quantitative analysis, enhance verbal task communication by providing continuous visual feedback, thus promoting natural and effective human-robot communication. Additionally, the study offers a set of design implications, emphasizing how dynamically generated visual aids can serve as an effective communication medium in human-robot interaction. These findings underscore the potential of generative visual aids to inform the design of more intuitive and effective human-robot communication, particularly for complex communication scenarios in human-robot interaction and LLM-based end-user development.[......]  End-user development allows everyday users to tailor service robots or applications to their needs. One user-friendly approach is natural language programming. However, it encounters challenges such as an expansive user expression space and limited support for debugging and editing, which restrict its application in end-user programming. The emergence of large language models (LLMs) offers promising avenues for the translation and interpretation between human language instructions and the code executed by robots, but their application in end-user programming systems requires further study. 
We introduce Cocobo, a natural language programming system with interactive diagrams powered by LLMs. Cocobo employs LLMs to understand users’ authoring intentions, generate and explain robot programs, and facilitate the conversion between executable code and flowchart representations. Our user study shows that Cocobo has a low learning curve, enabling even users with zero coding experience to customize robot programs successfully.

[......]

End-user development allows everyday users to tailor service robots or applications to their needs. One user-friendly approach is natural language programming. However, it encounters challenges such as an expansive user expression space and limited support for debugging and editing, which restrict its application in end-user programming. The emergence of large language models (LLMs) offers promising avenues for the translation and interpretation between human language instructions and the code executed by robots, but their application in end-user programming systems requires further study. We introduce Cocobo, a natural language programming system with interactive diagrams powered by LLMs. Cocobo employs LLMs to understand users’ authoring intentions, generate and explain robot programs, and facilitate the conversion between executable code and flowchart representations. Our user study shows that Cocobo has a low learning curve, enabling even users with zero coding experience to customize robot programs successfully.

[......]  Autonomous agents, including service robots, require adherence to moral values, legal regulations, and social norms to interact effectively with humans. A vital aspect of this is the acquisition of ownership relationships between humans and their carrying items, which leads to practical benefits and a deeper understanding of human social norms. The proposed framework enables the robots to learn item ownership relationships autonomously or through user interaction. The autonomous learning component is based on Human-Object Interaction (HOI) detection, through which the robot acquires knowledge of item ownership by recognizing correlations between human-object interactions. The interactive learning component allows for natural interaction between users and the robot, enabling users to demonstrate item ownership by presenting items to the robot. The learning process has been divided into four stages to address the challenges posed by changing item ownership in real-world scenarios. While many aspects of ownership relationship learning remain unexplored, this research aims to explore and design general approaches to item ownership learning in service robots concerning their applicability and robustness. In future work, we will evaluate the performance of the proposed framework through a case study.[......]

This study investigated how drivers manage the take-over when a silent or alerted failure of automated lateral control occurs after monotonous hands-off driving with partial automation. Twenty-two drivers with varying levels of prior ADAS experience participated in a driving simulator experiment. The failures were injected into the driving scenario on curved road segments, accompanied by either a visual-auditory alert or no change in the HMI. Results indicated that drivers could rarely maintain lane-keeping when automated steering was disabled silently, but most drivers safely managed the alerted failure within the ego-lane. The silent failure yielded significantly longer take-over times and generally worse lateral control quality. In contrast, poorer longitudinal control performance was observed in the alerted condition due to more brake usage. An expert-based controllability assessment method was introduced in this study. The silent lateral failure during monotonous hands-off driving was rated as uncontrollable, while the alerted situation was rated as basically controllable. Participants expressed their preferences for take-over requests (TORs), and the importance of conveying the reasons for a TOR was also demonstrated.

Service robots have been applied in an increasing number of scenarios, including homes, hospitals, offices, schools, and hotels. To ensure the usability of the interaction interface and process, the design of human-robot interaction (HRI) for service robot applications must consider various interaction modalities, different robot forms, and different physical behaviors of robots in space. This makes it challenging to find low-cost, high-fidelity prototyping methods that support design exploration in the early stages of service robot application design. Currently, many rapid prototyping techniques are applied in the design exploration stage, such as paper prototypes, storyboards, and video prototypes. However, these methods have limitations, including low fidelity and detachment from the environmental context. Researchers have also been exploring new prototyping methods to meet the design exploration and testing requirements of HRI design. Some studies have explored the use of VR to test HRI prototypes, but most of them focus on technical aspects and the performance of HRI capabilities. Other studies focus on specific HRI design aspects, such as interaction mechanisms, anthropomorphic appearance, and social acceptance. Approaches focusing on these aspects are not suitable for prototyping and testing the overall interaction scenarios of service robot applications. Based on the characteristics of virtual reality technology, this paper explores how virtual reality can be used to support multi-user collaborative design of service robot applications in a virtual environment. To address this issue, this paper proposes a system for supporting collaborative design of service robot applications. The system framework and implementation will be described in detail in the paper. Specifically, the system enables multiple users (designers or stakeholders) to enter a virtual environment in real time using head-mounted VR devices. Users can select appropriate environment model assets based on the target application scenario and perform bodystorming "on site". Using a modular robot building tool, users can add virtual robots to the space, add or delete functional components, and adjust the position, size, and orientation of the components. The system's Wizard-of-Oz (WoZ) module allows users to control the robot's movement and component status. The graphical UI is embedded into the physical display of the robot via WebView, which supports simulation of the graphical UI interaction process. After completing the initial application concept ideation and virtual robot design, users can use WoZ and role-playing techniques to act out the human-robot interaction process in order to evaluate and optimize the interaction design. In addition, the recorded video of the performance can support subsequent design discussions. The system is implemented using the Unity game engine, and users interact with it using Oculus Quest headsets and controllers. A design activity case based on the system will be evaluated and discussed to analyze the system's strengths and weaknesses. Finally, we discuss the limitations of this work and future research directions for supporting the design of service robot applications using virtual reality.

Explanations have become increasingly vital for communicating to human drivers the reasons behind the decisions of autonomous vehicles (AVs), particularly in tactical-level driving tasks. Focusing on lane-changing scenarios, we examine whether providing tactical-level explanations and, in addition, whether providing a confirmation option influence drivers’ decision-making, trust, and emotional experience. Thirty participants were equally assigned to three groups: indicator (I), explanation (E), and explanation + confirmation (EC), each experiencing four lane-changing scenarios in a driving simulator. Real-time question probes and interviews were used to understand drivers’ decision-making processes, and post-drive questionnaires on trust and emotional experience were administered. Results indicated that merely providing tactical-level explanations had little effect on drivers’ trust and experience but led to worse decision-making performance. The option to confirm lane changes after an explanation promoted drivers’ trust but had mixed effects on decision-making performance. Situational trust and decision-making performance varied significantly across lane-changing scenarios.

Benefiting from progress in the field of explainable artificial intelligence (XAI), explanations have become increasingly promising in the autonomous vehicle (AV) context. Providing explanations has been shown to be vital for human-AV interaction, but what to explain and how to explain it remain open questions. This study seeks to bridge the areas of XAI and human-AV interaction by combining the perspectives of both users and researchers. In this paper, a conceptual framework of explanation models is proposed to indicate which aspects to explain in human-AV interaction. Based on the framework, we introduce a scenario-based and question-driven method, the SQX-canvas, to guide the workflow of generating explanations from users’ demands in a given AV scenario. As an initial validation of the method, a co-design workshop involving researchers and users was conducted with four AV scenarios presented as video clips. Participants produced explanation concepts and expressed their attitudes towards the AV scenarios following the “scenario, question and explanation” process. Users’ demands for explanations clearly varied across scenarios; findings and limitations are discussed. This method could provide implications for research and practice on facilitating transparent human-AV interaction.

With the widespread application of modern artificial intelligence technology in autonomous driving systems, the problem of explainability has become increasingly prominent. This paper therefore examines the framework and design elements of explainable interaction in human-autonomous vehicle interaction, with the aim of enhancing the decision transparency, safety, and user trust of autonomous driving systems. Drawing on the basic theories and methods of explainable artificial intelligence and human-computer interaction, the paper first introduces explainable artificial intelligence and summarizes current methods for extracting explainable content. It then builds a framework for explainable interaction in human-autonomous vehicle interaction on the basis of a transparency model of human-robot interaction. Finally, it discusses the interaction design of explainability along several design dimensions, including the target, form, and evaluation of explanations, and analyzes them with case studies. As the interface between humans and model decisions, explainability is not merely an AI technology problem; it is closely tied to people and involves multiple levels of human-autonomous vehicle interaction. The paper proposes a framework for explainable interaction in human-autonomous vehicle interaction and identifies the explanation content needed at each stage of the interaction as well as the elements of explainable interaction design.

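Intelligent systems are increasingly present in scenarios such as intelligent manufacturing, smart cities, healthcare, and everyday services. To address the many challenges that arise in interaction between humans and intelligent systems, this paper analyzes the key issues of human-machine intelligent collaboration from the perspectives of technology and experience. It traces the development and research scope from human-computer interaction to human-machine intelligent collaboration and proposes a research framework that integrates the technology and experience perspectives; starting from the characteristics of intelligent systems, it identifies the emerging issues that human-machine intelligent collaboration raises from the technology perspective; from the experience perspective, it discusses how to advance human-machine intelligent collaboration; and on this basis it summarizes development trends. The paper summarizes three stages in the evolution of human-computer interaction; identifies key technical issues of human-machine intelligent collaboration, including the allocation of agency between human and machine, dynamic learning and correction, context adaptation, and proactive response modes; discusses experience-related issues, including explainability, trust, emotional design, and fairness and accountability; and points out that human-machine intelligent collaboration is trending toward being comprehensive, diverse in type, and systematic.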

With the rapid development and application of autonomous vehicles, human-autonomous vehicle interaction (HAI) has recently gained importance. Simulation is an effective and efficient approach to research and testing in the HAI domain, and scenarios are crucial to the validity and experience of HAI simulators. However, research on systematically building scenarios is still lacking. This paper proposes the concept of the narrative scenario in HAI simulation and establishes a three-stage framework for building narrative scenarios. First, key-plot scenarios are collected from a whole-journey analysis and deconstructed into elements. Next, the elements are extracted and categorized into a library, and their physical and narrative attributes are defined. Finally, scenarios in simulators can be rebuilt in a narrative sequence driven by task goals.
