Human-Robot & Human-Vehicle Interaction / HRI & HMI

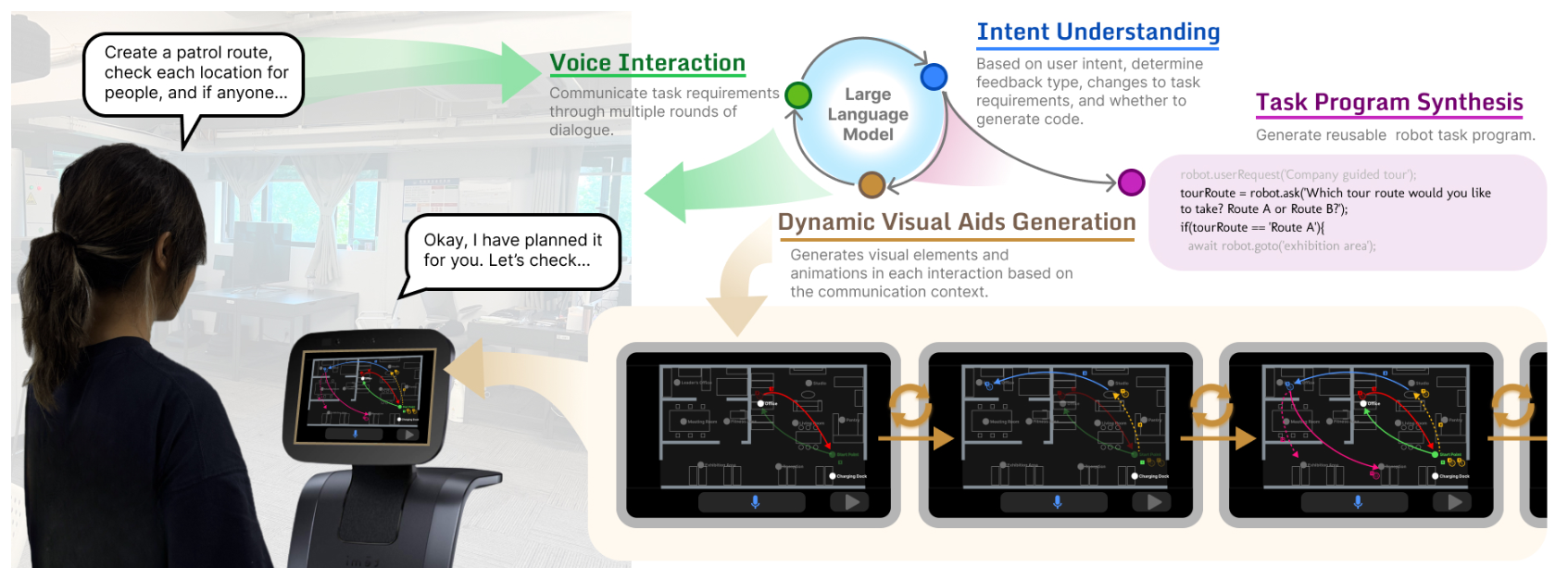

GenComUI: Exploring Generative Visual Aids as Medium to Support Task-Oriented Human-Robot Communication

This work investigates the integration of generative visual aids in human-robot task communication. We developed GenComUI, a system powered by large language models (LLMs) that dynamically generates contextual visual aids—such as map annotations, path indicators, and animations—to support verbal task communication and facilitate the generation of customized task programs for

Cocobo: Exploring Large Language Models as the Engine for End-User Robot Programming

End-user development allows everyday users to tailor service robots or applications to their needs. One user-friendly approach is natural language programming. However, it encounters challenges such as an expansive user expression space and limited support for debugging and editing, which restrict its application in end-user programming. The emergence of large

An Interactive Learning Framework for Item Ownership Relationship in Service Robots

Autonomous agents, including service robots, require adherence to moral values, legal regulations, and social norms to interact effectively with humans. A vital aspect of this is learning the ownership relationships between humans and the items they carry, which brings practical benefits and a deeper understanding of human social norms.

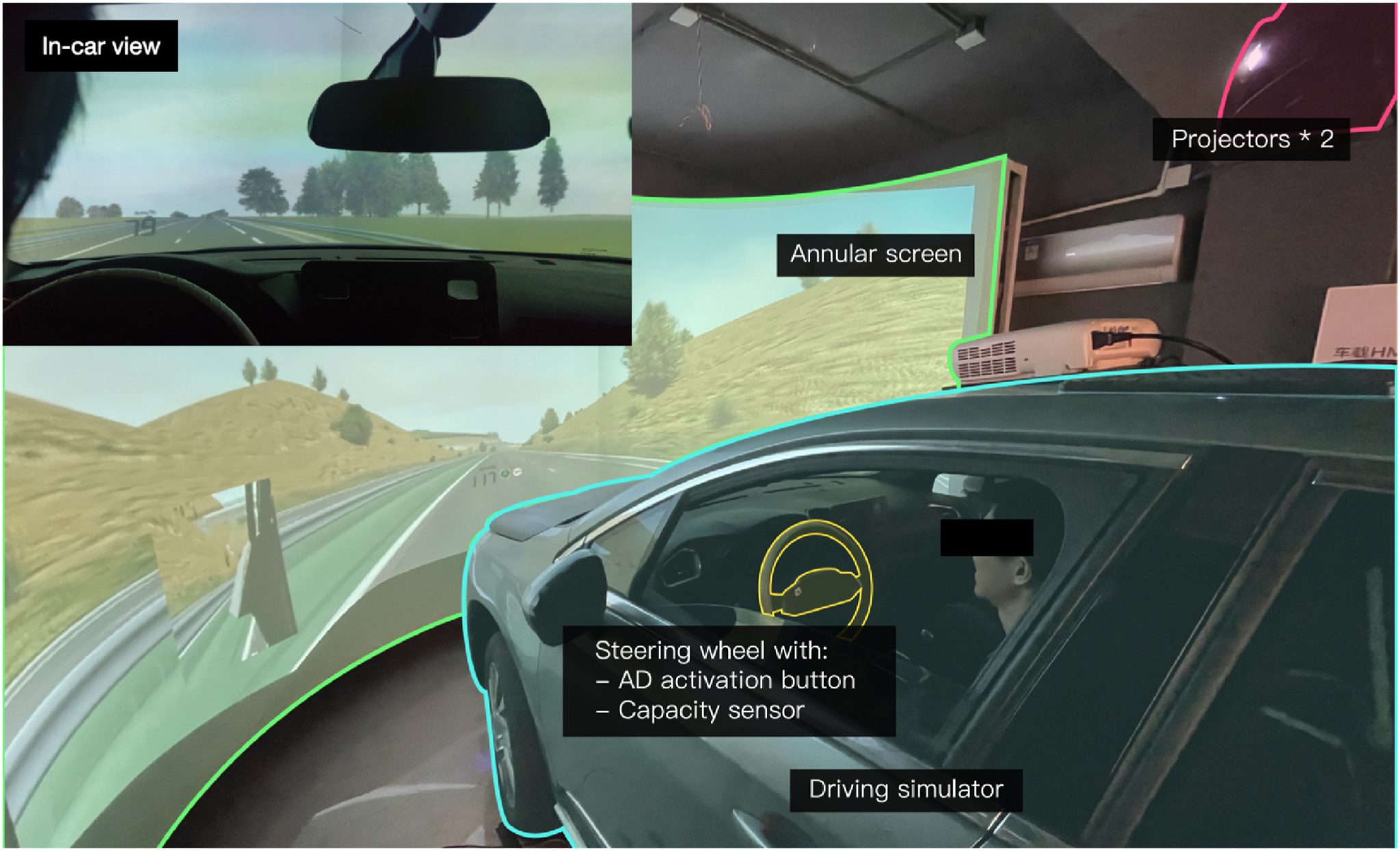

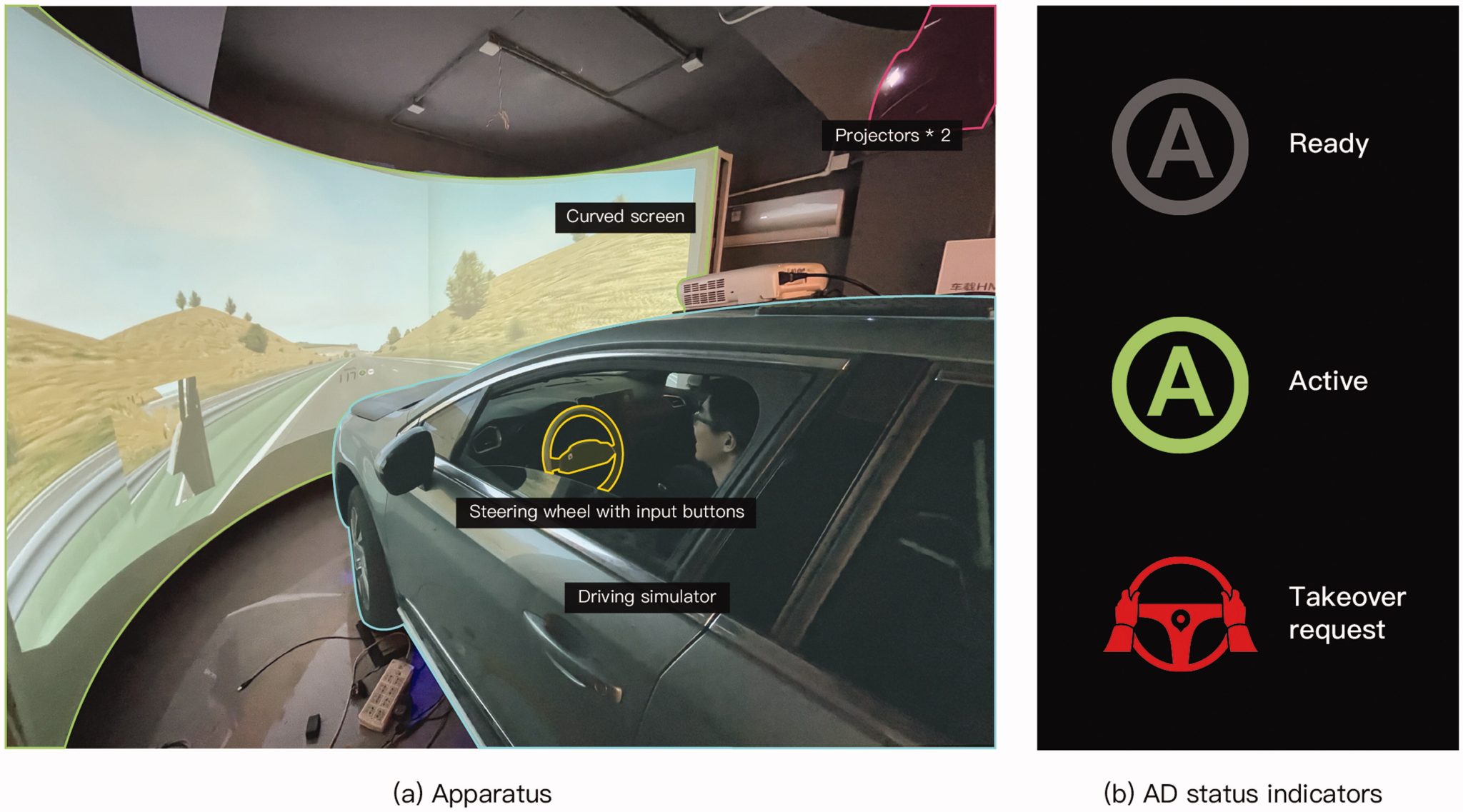

Keeping in the lane! Investigating drivers’ performance handling silent vs. alerted lateral control failures in monotonous partially automated driving

This study investigated how drivers manage the take-over when a silent or alerted failure of automated lateral control occurs after monotonous hands-off driving with partial automation. Twenty-two drivers with varying levels of prior ADAS experience participated in the driving simulator experiment. The failures were injected into the driving scenario on

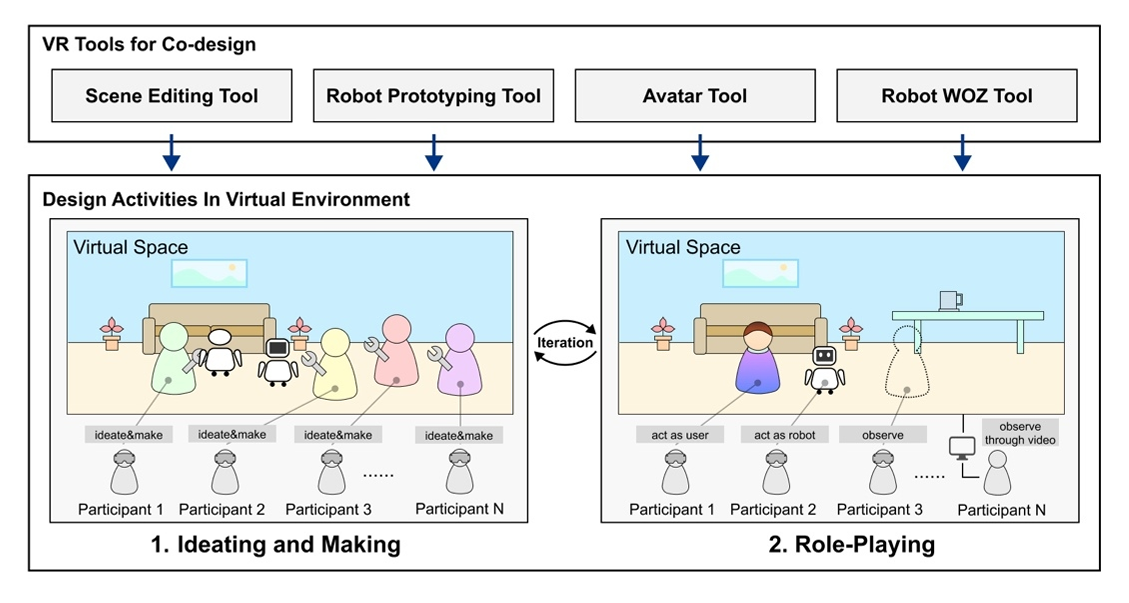

Co-Design of Service Robot Applications Using Virtual Reality

Service robots have been applied in an increasing number of scenarios, including homes, hospitals, offices, schools, hotels, etc. To ensure the usability of the interaction interface and process in the application of service robots, the design of human-robot interaction in the application of service robots involves various interaction modalities, different

Tactical-Level Explanation is Not Enough: Effect of Explaining AV’s Lane-Changing Decisions on Drivers’ Decision-Making, Trust, and Emotional Experience

Explanations have become increasingly vital in communicating to human drivers the reasons behind the decisions of autonomous vehicles (AVs), particularly in tactical-level driving tasks. Focusing on lane-changing scenarios, we examine whether providing tactical-level explanations and, in addition, a confirmation option influences drivers’ decision-making, trust, and emotional experience.

Towards Scenario-Based and Question-Driven Explanations in Autonomous Vehicles

Benefiting from progress in the field of explainable artificial intelligence (XAI), explanations have become increasingly promising in the autonomous vehicle (AV) context. Providing explanations has proven vital for human-AV interaction, but what to explain and how to explain it remain open questions. This study seeks to bridge

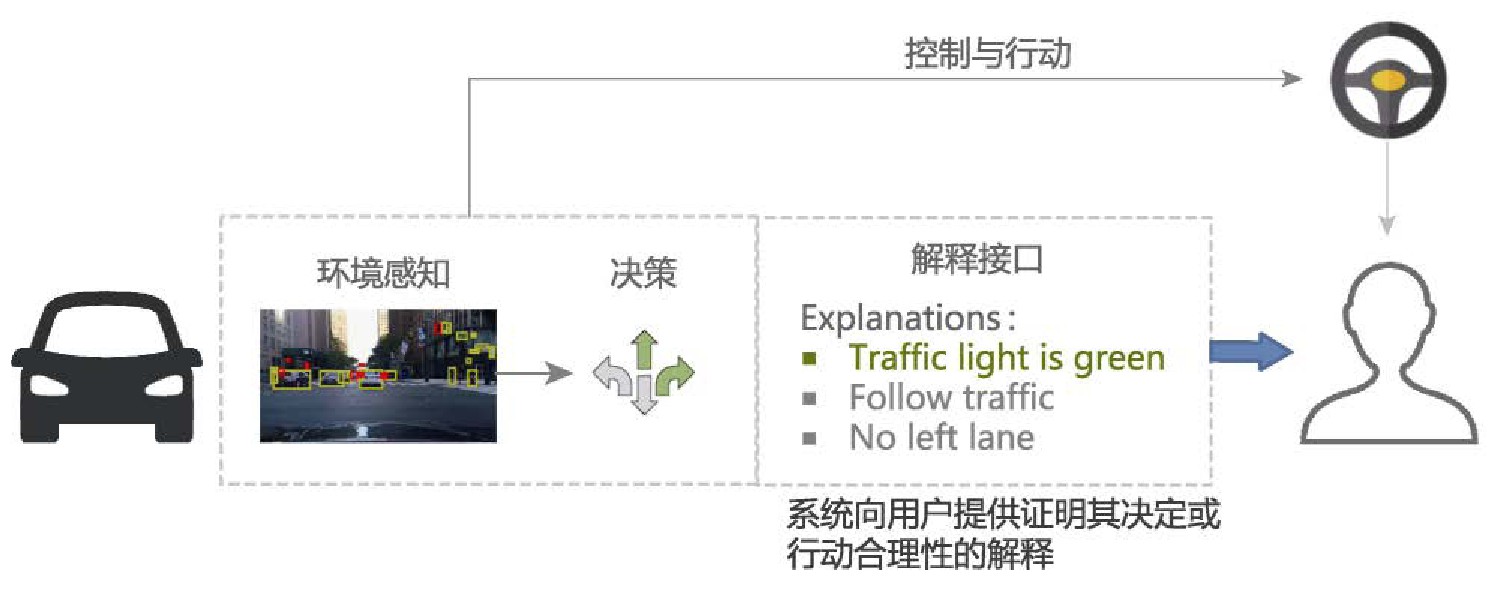

Research on Explainable Interaction in Human-Autonomous Vehicle Interaction (人-无人车交互中的可解释性交互研究)

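As modern artificial intelligence is widely applied in autonomous driving systems, the problem of explainability has become increasingly prominent. This paper therefore examines the framework and design elements of explainable interaction in human-autonomous vehicle interaction, with the aim of improving the decision transparency, safety, and user trust of autonomous driving systems. Drawing on the basic theories and methods of explainable artificial intelligence and human-computer interaction, the paper first introduces explainable AI and summarizes current methods for extracting explainable content. It then builds a framework for explainable interaction in human-autonomous vehicle interaction, based on a transparency model of human-robot interaction. Finally, it discusses the interaction design of explainability along several design dimensions, including the recipient, form, and evaluation of explanations, and analyzes case studies. As the interface between people and model decisions, explainability is not only an AI technology problem; it is closely tied to people and involves multiple levels of human-autonomous vehicle interaction. The paper proposes a framework for explainable interaction in human-autonomous vehicle interaction and derives the explanation content needed at each stage of the interaction, as well as the elements of explainable interaction design.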

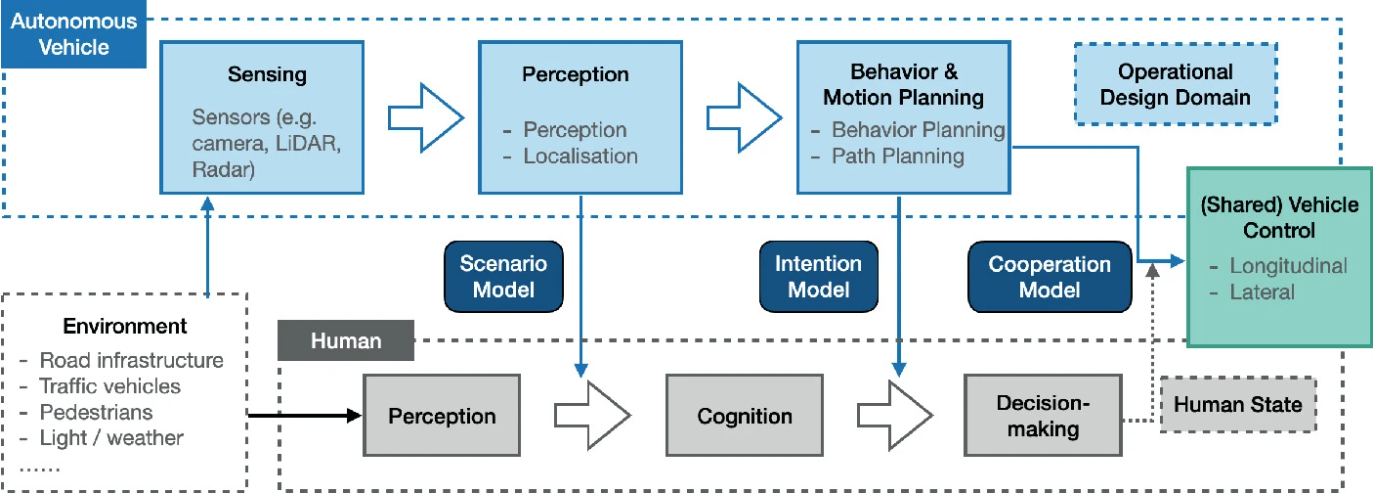

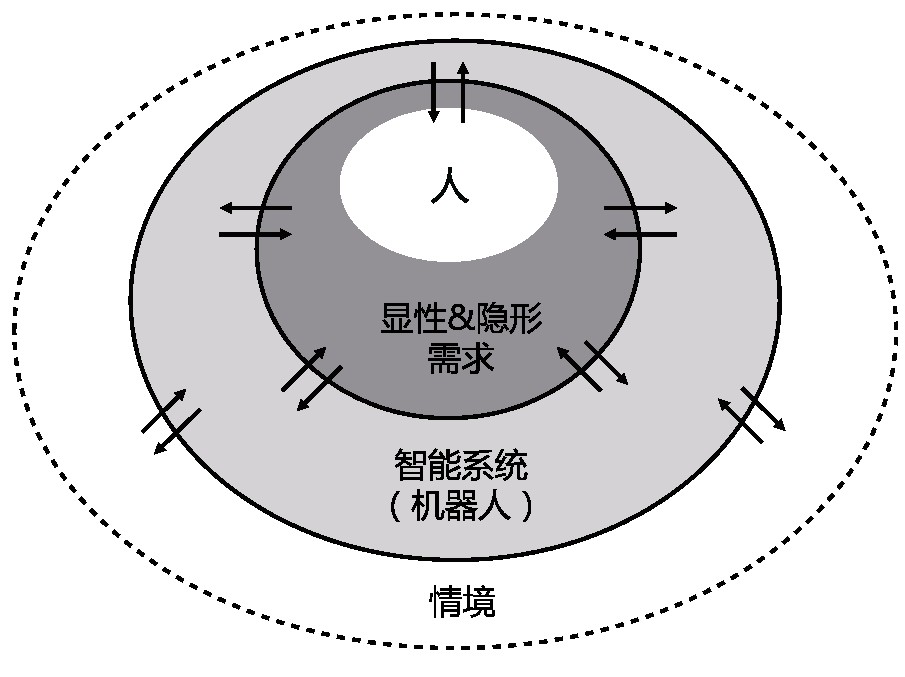

A Survey of Research on Human-Machine Intelligent Collaboration (人机智能协同研究综述)

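Intelligent systems are increasingly present in scenarios such as smart manufacturing, smart cities, healthcare, and everyday services. To address the many challenges that arise when people interact with intelligent systems, this paper analyzes the key problems of human-machine intelligent collaboration from the perspectives of technology and experience. It traces the development from human-computer interaction to human-machine intelligent collaboration and outlines the research scope, then proposes a research framework that combines the technology and experience perspectives. Starting from the characteristics of intelligent systems, it identifies the emerging problems that human-machine intelligent collaboration raises from the technology perspective; from the experience perspective, it discusses how to advance human-machine intelligent collaboration; on this basis, it summarizes development trends. The paper summarizes three stages in the evolution of human-computer interaction; presents the key problems from the technology perspective, including the allocation of agency between human and machine, dynamic learning and correction, context adaptation, and proactive response modes; discusses explainability, trust, emotional design, and fairness and accountability from the experience perspective; and points out that human-machine intelligent collaboration is developing in an all-round, multi-type, and systematic direction.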

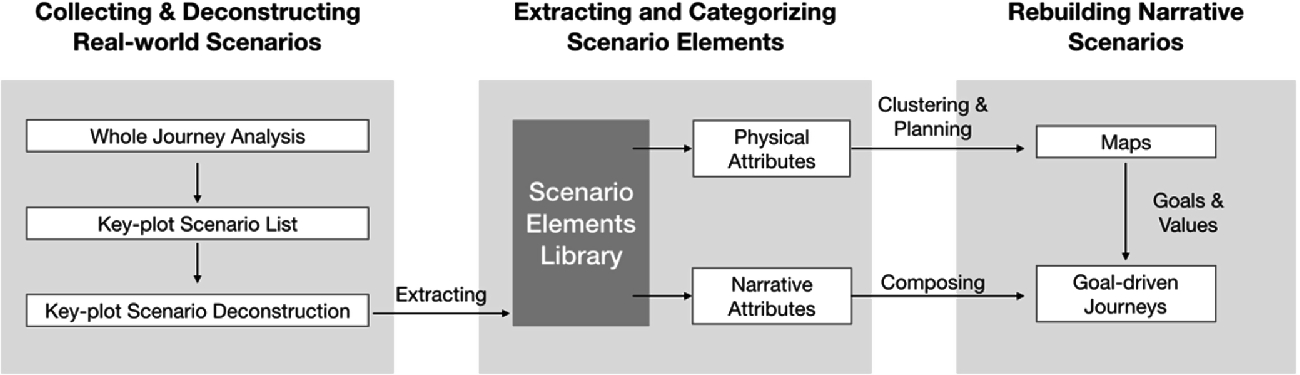

Building Narrative Scenarios for Human-Autonomous Vehicle Interaction Research in Simulators

With the rapid development and application of autonomous vehicles, human-autonomous vehicle interaction (HAI) has recently gained importance. Simulation is an effective and efficient approach for the research and testing in the HAI domain, and scenarios are crucial to the validity and experience of HAI simulators. However, research on systematically building

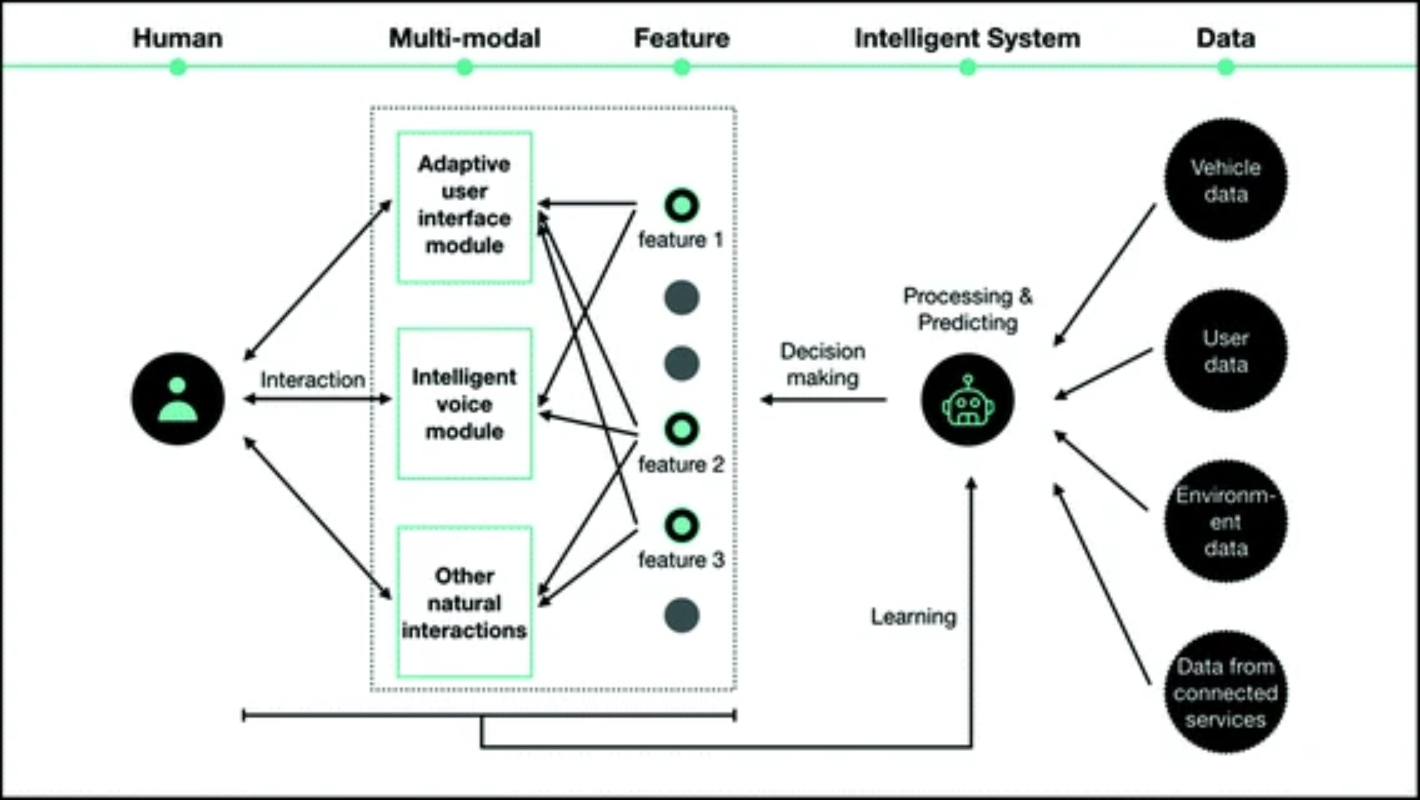

Proactive Automotive Interaction Design Based on Intelligent Interaction (基于智能交互的汽车主动响应式交互设计)

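By introducing proactive HMI, a vehicle can predict the user's intention and initiate functions on its own, reducing distraction, increasing flexibility, and improving driving safety and user experience. With proactive HMI, the accuracy of the intention prediction model at the mechanism level becomes the key factor in the quality of the proactive HMI experience. However, effective means of improving the accuracy of user prediction models are lacking, and related research remains insufficient. Intelligent interaction is an effective way to improve the performance of robots. Introducing this technique into proactive interaction design makes it possible to obtain the user's intention through interaction, which helps break through the bottleneck of current algorithms. The paper proposes a design framework for proactive automotive interaction based on intelligent interaction, uses intelligent interaction to improve prediction accuracy, lists the key points that deserve attention, and illustrates them with concrete design cases.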

From HMI to HRI: Human-Vehicle Interaction Design for Smart Cockpit

HMI is used to refer to human-vehicle interaction design from the perspective of treating the car as a machine. However, with the rapid increase in demand for smart cockpits, it would put strong constraints on the design of intelligent interactions and connected services if we still design from the perspective of